MySQL 8.0 GA is right around the corner. I don't have precise information about its release, as I don't work at Oracle. If I did, I would probably know, but company policy would prevent me from saying when the release is scheduled. I can, however, speculate and infer, based on my experience with previous releases. My personal assessment is that the release will appear before 9:00am PT on April 24, 2018. The "before" can be anything from a few minutes to one week in advance.

Then, again, it may not happen at all if someone finds an atrocious bug that needs to be fixed asap.

Either way, users are keen on testing the new release in its current state of release candidate. Here I show a few methods that allow you to have a taste of the new goodies without waiting for the triumphal (keynote) announcement.

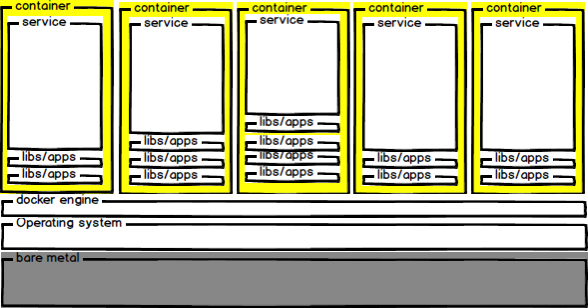

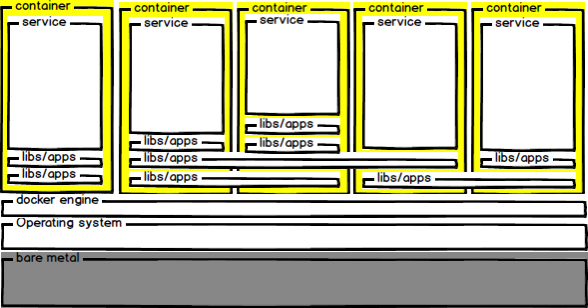

1. Docker containers

If you are a Docker user, using a container to test MySQL is a no-brainer. Unlike virtual machines or standalone servers, a Docker container comes ready to use, with nothing to configure. All you need to do is pull the right image. As with every Docker image, you pull it once and then use it as many times as you need.

There are two reliable images that contain the latest MySQL. One is called mysql:8.0 and is tagged as official, which means that it is released by the Docker maintenance team. The other one, which is released by the MySQL team, is called mysql/mysql-server:8.0.

$ docker pull mysql:8.0

8.0: Pulling from library/mysql

Digest: sha256:7004063f8bd0c7bade8d1c526b9b8f5188c8288f411d76ee4ba83131e00c6f02

Status: Downloaded newer image for mysql:8.0

$ docker pull mysql/mysql-server:8.0

8.0: Pulling from mysql/mysql-server

Digest: sha256:e81d95f788adb04a4d2fa5f6f7e9283ca0f6360fb518efe65af5a7377a4ec282

Status: Downloaded newer image for mysql/mysql-server:8.0

The mysql image is based on Debian, while the original package, as you would expect, is based on Oracle Linux.

Let's see how to run MySQL in a container.

$ docker run --name official -e MYSQL_ROOT_PASSWORD=secret -d mysql:8.0

60ec307578a139f5083ded07e94d737690d287b1b95093878675983a5cc40174

$ docker run --name original -e MYSQL_ROOT_PASSWORD=secret \
    -d mysql/mysql-server:8.0

0c93bb4a97ffa53232a69732d3ae45413a443e38fa43ad6fdc4057168cba42d2

With the above commands we get two containers, one for the official image and one for the original one.

We can't use them straight away, though. We need to wait for the servers to be ready. An easy method to verify the status of the server is looking at docker logs:

$ docker logs original --tail 1

2018-04-01T21:23:30.395461Z 0 [System] [MY-010931] /usr/sbin/mysqld: ready for connections. Version: '8.0.4-rc-log' socket: '/var/lib/mysql/mysql.sock' port: 3306 MySQL Community Server (GPL).

$ docker logs original --tail 1

2018-04-01T21:23:30.395461Z 0 [System] [MY-010931] /usr/sbin/mysqld: ready for connections. Version: '8.0.4-rc-log' socket: '/var/lib/mysql/mysql.sock' port: 3306 MySQL Community Server (GPL).

Here, after about 10 seconds, both containers are ready to use. We can now access the servers. One easy method is through docker exec:

$ docker exec -ti original mysql -psecret

mysql: [Warning] Using a password on the command line interface can be insecure.

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 15

Server version: 8.0.4-rc-log MySQL Community Server (GPL)

Copyright (c) 2000, 2018, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql>

A similar command would allow us to access the other container.
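The manual check with docker logs shown earlier can also be scripted. The sketch below polls the last log line of a container until it contains "ready for connections", the message seen in the output above. The function name `wait_for_mysql` and the 60-second default timeout are illustrative assumptions, not part of Docker or of either image. It also assumes that the readiness message is the last line logged, as it was in the run above; an image that logs more lines afterwards would need a different check.

```shell
# Sketch: wait until a MySQL container reports that it is ready.
# wait_for_mysql is a hypothetical helper, not a Docker command.
# Usage: wait_for_mysql CONTAINER [TIMEOUT_SECONDS]
wait_for_mysql() {
    container="$1"
    timeout="${2:-60}"    # assumed default: give up after 60 seconds
    elapsed=0
    while [ "$elapsed" -lt "$timeout" ]; do
        # mysqld writes its log to stderr, so merge both streams
        # before searching the last line for the readiness message.
        if docker logs --tail 1 "$container" 2>&1 |
            grep -q 'ready for connections'; then
            return 0
        fi
        sleep 1
        elapsed=$((elapsed + 1))
    done
    echo "timed out waiting for $container" >&2
    return 1
}
```

With the containers created above, `wait_for_mysql official && wait_for_mysql original` would return once both servers have logged the readiness line, or fail after the timeout.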

If you want to try replication, more work is needed. In these articles you will find more details on Docker operations, and examples of advanced deployments:

- MySQL-Docker operations. - Part 1: Getting started with MySQL in Docker.

- MySQL-Docker operations. - Part 2: Customizing MySQL in Docker.

- MySQL-Docker operations. - Part 3: MySQL replication in Docker.

- a proposal for better MySQL security

- another security proposal

- MySQL docker containers: understanding the basics

2. Sandboxes

A sandboxed database is deployed in a non-dedicated box, with its configuration altered in such a way that it runs independently from other similar deployments, and even from databases running in the main space.

The granddaddy of the sandbox deployer was MySQL-Sandbox, which has recently evolved into the more powerful and easier to use dbdeployer.

You can use MySQL-Sandbox to test a MySQL 8.0 tarball on MacOS:

$ make_sandbox --export_binaries mysql-8.0.4-rc-macos10.13-x86_64.tar.gz

This command unpacks the tarball into $HOME/opt/mysql and deploys the database in $HOME/sandboxes/msb_8_0_4.

Until recently, the same command would work on Linux without modifications. In MySQL 8.0.4, though, the tarball organization for Linux has changed. There are symbolic links for SSL libraries inside the ./bin directory. Those symlinks are not extracted by default, but only if you use the option --keep-directory-symlink when opening the tarball. MySQL-Sandbox doesn't do it, also because this option is not standard to every version of tar.
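Since the option is not available in every tar, it can help to check for it before extracting. The following is a minimal sketch that searches the output of `tar --help` for the option name. The function name `tar_supports_keep_directory_symlink` is hypothetical, and the check assumes that a tar supporting the option also lists it in its help text, which is the case for GNU tar.

```shell
# Sketch: report whether a tar binary advertises --keep-directory-symlink.
# tar_supports_keep_directory_symlink is a hypothetical helper name.
# Usage: tar_supports_keep_directory_symlink [TAR_COMMAND]
tar_supports_keep_directory_symlink() {
    tar_cmd="${1:-tar}"    # defaults to the tar found in PATH
    # Assumption: a tar that accepts the option mentions it in --help.
    "$tar_cmd" --help 2>&1 | grep -q -e '--keep-directory-symlink'
}
```

A typical use would be `tar_supports_keep_directory_symlink || echo "this tar will skip the symlinks"`.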

Thus, if you want to use the old MySQL-Sandbox, you need to run the extraction manually.

$ cd $HOME/opt/mysql

$ tar --keep-directory-symlink -xzf /tmp/mysql-8.0.4-rc-linux-glibc2.12-x86_64.tar.gz

$ mv mysql-8.0.4-rc-linux-glibc2.12-x86_64 8.0.4

$ make_sandbox 8.0.4

I don't recommend the above procedure, for either Linux or MacOS. The main reason, in addition to the manual operations involved, is that MySQL-Sandbox is not going to be updated for the time being. Instead, you should use dbdeployer, which has all the main features of MySQL-Sandbox and a lot of new ones. Here's the equivalent procedure:

$ dbdeployer unpack /tmp/mysql-8.0.4-rc-linux-glibc2.12-x86_64.tar.gz

$ dbdeployer deploy single 8.0.4

Database installed in $HOME/sandboxes/msb_8_0_4

run 'dbdeployer usage single' for basic instructions'

. sandbox server started

dbdeployer uses a different method to initialize the database server, which at the same time makes the initialization more visible and avoids the problem of the phantom SSL libraries.

Note: Tarballs for recent MySQL versions are really big. MySQL 8.0.4 binaries expand to 1.9 GB. If storage is an issue, you should get the tarballs from a collection of minimised tarballs (Linux only) for most MySQL versions. For now, it's maintained by me, but I hope that the MySQL team will release something similar.

Once you have deployed a sandbox with MySQL 8.0, using it is easy:

$ cd $HOME/sandboxes/msb_8_0_4

$ ./use

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 8

Server version: 8.0.4-rc-log MySQL Community Server (GPL)

Copyright (c) 2000, 2018, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql [localhost] {msandbox} ((none)) >

dbdeployer creates several shortcuts for the most common commands to use the database. ./use is the most common, and provides access to the MySQL client with all the options needed to use it correctly. For more information on what is available, run:

$ dbdeployer usage single

This functionality alone would be enough to choose a sandbox as your preferred method for testing. However, this is only a tiny portion of what you can do with dbdeployer on your own computer. With a single command, you can test master/slave replication, multi-primary group replication, single-primary group replication, fan-in, and all-masters topologies.

You can try the following commands:

$ dbdeployer deploy single 8.0.4

$ dbdeployer deploy replication 8.0.4

$ dbdeployer deploy replication 8.0.4 --topology=group

$ dbdeployer deploy replication 8.0.4 --topology=group --single-primary

$ dbdeployer deploy replication 8.0.4 --topology=all-masters

$ dbdeployer deploy replication 8.0.4 --topology=fan-in

If you have enough RAM, all these deployments will survive in parallel.

On my desktop, I can run:

$ dbdeployer sandboxes --header

name type version ports

---------------- ------- ------- -----

all_masters_msb_8_0_4 : all-masters 8.0.4 [15001 15002 15003]

fan_in_msb_8_0_4 : fan-in 8.0.4 [14001 14002 14003]

group_msb_8_0_4 : group-multi-primary 8.0.4 [20009 20134 20010 20135 20011 20136]

group_sp_msb_8_0_4 : group-single-primary 8.0.4 [21405 21530 21406 21531 21407 21532]

msb_8_0_4 : single 8.0.4 [8004]

rsandbox_8_0_4 : master-slave 8.0.4 [19009 19010 19011]

When MySQL 8.0.11 is released, you can replace "8.0.4" with "8.0.11" and get a similar result.

BTW, you have seen that deploying replication sandboxes may take a long time. You may try adding --concurrent to each command, and enjoy a notable speed increase.
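The deployment commands listed earlier can be wrapped in a small function, so that switching version or adding --concurrent means changing one argument. This is only a sketch: `deploy_all_topologies` is a hypothetical helper, not a dbdeployer command, and it assumes that any extra options should be passed to the replication deployments only.

```shell
# Sketch: deploy a single sandbox plus every replication topology
# mentioned in the article for one MySQL version.
# deploy_all_topologies is a hypothetical helper name.
# Usage: deploy_all_topologies VERSION [EXTRA_OPTIONS...]
deploy_all_topologies() {
    if [ "$#" -lt 1 ]; then
        echo "usage: deploy_all_topologies VERSION [EXTRA_OPTIONS...]" >&2
        return 2
    fi
    version="$1"
    shift    # the remaining arguments (e.g. --concurrent) are extras

    dbdeployer deploy single "$version" || return 1

    # The empty entry is the default master/slave topology.
    for topology in "" \
        "--topology=group" \
        "--topology=group --single-primary" \
        "--topology=all-masters" \
        "--topology=fan-in"; do
        # $topology is left unquoted on purpose, so that
        # "--topology=group --single-primary" splits into two options.
        dbdeployer deploy replication "$version" $topology "$@" || return 1
    done
}
```

For example, `deploy_all_topologies 8.0.4 --concurrent` runs the six deployments shown above, adding --concurrent to the replication ones. The function stops at the first deployment that fails.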

What else can you do with the sandboxes you have just deployed? Plenty! For a complete list, have a look at the online documentation. But for the moment, you may try this:

$ dbdeployer global status

$ dbdeployer global test

$ dbdeployer global test-replication

3. Other methods

Besides the methods that I recommend, there are others that you could use, but I won't give advice on them, as other people are better qualified to do so.

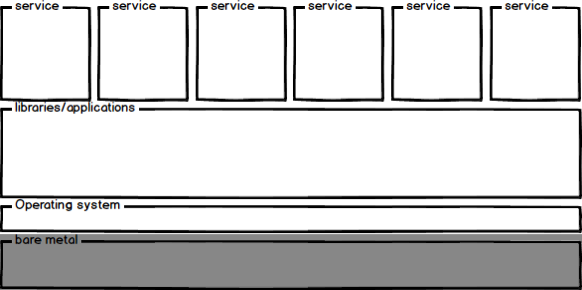

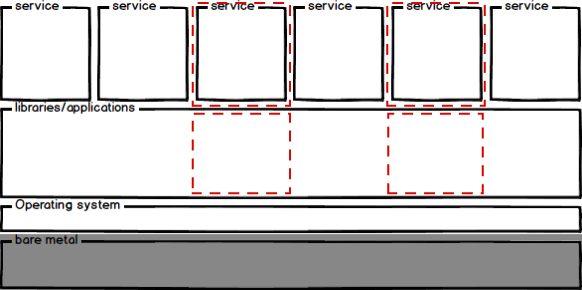

- Standalone server. If you have the luxury of having one or more standalone servers sitting in a lab, by all means go for it. Just follow the instructions about installing MySQL on your lucky server. Be advised, though, that depending on the method you choose and the version of your operating system, you may face compatibility issues (.rpm or .deb dependencies).

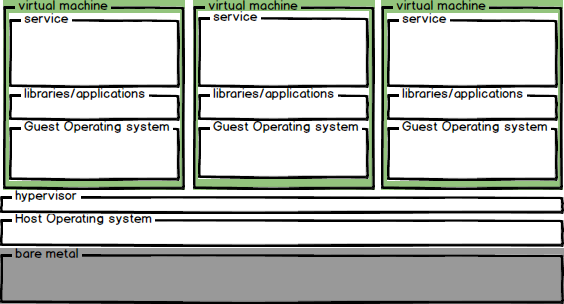

- Virtual machines. VMs share with standalone servers the same ease of installation (and the same dependency issues), only a bit slower. They are convenient, as you can use them to test in conditions that more closely resemble production settings, and if you use a configuration management tool such as Puppet or Ansible, your task of testing the new version could be greatly simplified. The instructions for virtual machines are the same as those for standalone servers.