Working with replication, you come across many topologies: some sound and established, some less so, and some still in the realm of hopeless wishes. I have been working with replication for almost 10 years now, and my wish list has grown quite long in that time. In the last 12 months, though, while working at Continuent, some of the topologies I wanted to work with have moved from the cloud of wishful thinking to the firm land of things that happen. My quest for star replication starts with the most common topology: one master, many slaves.

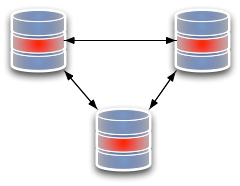

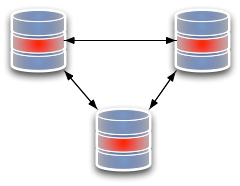

Fig 1. Master/Slave topology, with legend

It looks like a star, with rays extending from the master to the slaves. This is the basis of most replication deployed nowadays, and it holds few surprises. Setting aside the problems of failing over and switching between nodes, which I will examine in another post, let's move to another star.

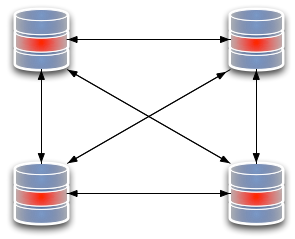

Fig 2. Fan-in slave, or multiple sources

Multiple-source replication, also known as the fan-in topology, has several masters replicating to the same slave. For years, this was forbidden territory for me. But Tungsten Replicator lets you create multiple-source topologies easily. This topology is essentially unidirectional, though. I am also interested in topologies with more than one master, where I can retrieve data from multiple points.

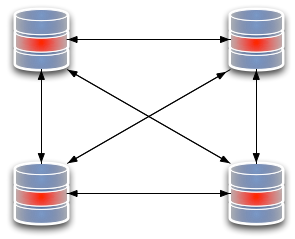

Fig 3. All-to-all, three nodes. Fig 4. All-to-all, four nodes.

Tungsten Multi-Master Installation solves this problem. It lets me create topologies where every node replicates to every other node. The three-node scheme looks like a straightforward solution. When we add one node, though, the amount of network traffic grows considerably. Each double-sided arrow means a replication service at each end of the line, and two open data channels. Three nodes are linked by three arrows, while four nodes need six, so moving from three nodes to four doubles the replication services and channels needed to sustain the scheme, from 6 to 12. For several months, I was content with this. I thought: it is heavy, but it works, and it does far more than native replication can, especially considering that Shard Filters give you a practical way of preventing conflicts.

But that was not enough. Something kept gnawing at me, and from time to time I experimented with Tungsten Replicator's huge flexibility to create new topologies. But the star kept eluding me. Until… Until, guess what? A customer asked for it. The problem suddenly ceased to be a personal whim and became a business opportunity. Instead of looking at the issue in the idle way I often think about technology, I went at it with practical determination.

When I experimented in my free time, either the pieces did not fit together the way I wanted, or I got an endless loop. Tungsten Replicator has a set of conceptually simple components. You deploy a pipeline between two points, open the tap, and data starts flowing in one direction. Even with multi-master replication, the principle is the same: you deploy many pipes, and each one has only one purpose.
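The doubling mentioned above can be checked with a little arithmetic. If we count, as the figures do, one replication service and one data channel at each end of every double-sided arrow, then n nodes in an all-to-all topology are joined by n(n-1)/2 arrows, which gives n(n-1) services and the same number of channels. The sketch below assumes that counting convention and ignores each node's own master service. The function name is hypothetical.

```shell
#!/usr/bin/env bash
# Count the replication services (equal to the data channels) in an
# all-to-all topology. Assumed convention: each double-sided arrow between
# two nodes carries one service and one channel at each end; the nodes'
# own master services are not counted.
all_to_all_services() {
  local n=$1
  # n*(n-1)/2 arrows, two services each
  echo $(( n * (n - 1) ))
}

for n in 3 4; do
  echo "$n nodes: $(all_to_all_services "$n") services and channels"
done
```

It prints 6 for three nodes and 12 for four.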

Fig 5. All-masters star topology

In the star topology, however, you need to open more taps, but not too many, because you must keep the data from looping around. The recipe, as it turned out, is to create a set of bi-directional replication systems, where the slave services on the central node accept changes only from their specific master, and the slave services on the peripheral nodes accept changes from any master. It was as simple as that.

There are, of course, benefits and drawbacks to a star topology compared to an all-replicate-to-all design. The star topology creates a single point of failure: if the central node fails, replication stops, and the central node needs to be replaced. The all-to-all design has no such weakness. Its abundance of connections ensures that, if a node fails, the system keeps working without any intervention. There is no need for fail-over.
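The following bash toy model shows why these filtering rules avoid loops. It is not Tungsten code. It encodes my simplified reading of the recipe above, plus two assumptions the text does not state. First, a slave service never re-applies an event that originated on its own node. Second, only the hub logs slave updates, as the Tungsten Sandbox output quoted in the comments does with `--log-slave-updates=true`. The node names are hypothetical.

```shell
#!/usr/bin/env bash
# Toy model (not Tungsten code) of one event travelling through a star.
# Assumed rules, simplifying the recipe described in the text:
#   - a slave service never re-applies events that originated on its own node;
#   - hub slave services accept only events from the master they read from;
#   - spoke slave services accept events from any master;
#   - only the hub logs slave updates, so only the hub republishes events.
# Requires bash 4+ for associative arrays.
HUB=bravo
SPOKES=(alpha charlie delta)   # hypothetical node names

# accepts LOCAL REMOTE ORIGIN: succeeds if the slave service on LOCAL,
# reading from REMOTE's master, applies an event that originated on ORIGIN.
accepts() {
  local local_node=$1 remote=$2 origin=$3
  if [[ $origin == "$local_node" ]]; then
    return 1                      # never re-apply the node's own events
  fi
  if [[ $local_node == "$HUB" ]]; then
    [[ $origin == "$remote" ]]    # hub: only the specific master
  else
    return 0                      # spoke: any master
  fi
}

# propagate ORIGIN: prints "node count" per node, where count is how many
# times that node applied the event (1 everywhere means no loop).
propagate() {
  local origin=$1 pub reader node
  local -a readers
  local -A applied=(["$origin"]=1)   # the original local write
  local -a queue=("$origin")         # masters whose log holds the event
  while (( ${#queue[@]} > 0 )); do
    pub=${queue[0]}
    queue=("${queue[@]:1}")
    # topology: the hub reads from every spoke, every spoke reads from the hub
    if [[ $pub == "$HUB" ]]; then
      readers=("${SPOKES[@]}")
    else
      readers=("$HUB")
    fi
    for reader in "${readers[@]}"; do
      if accepts "$reader" "$pub" "$origin"; then
        applied[$reader]=$(( ${applied[$reader]:-0} + 1 ))
        if [[ $reader == "$HUB" ]]; then
          queue+=("$reader")         # hub republishes what it applies
        fi
      fi
    done
  done
  for node in "$HUB" "${SPOKES[@]}"; do
    echo "$node ${applied[$node]:-0}"
  done
}

propagate alpha
```

Whichever node the event starts on, every node reports a count of 1. Each node applies the event exactly once, and nothing loops back to its origin.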

Fig 6. Extending an all-to-all topology. Fig 7. Extending a star topology.

However, the star has a huge advantage in node management. Adding a new node costs two services and two connections, while the same operation in a four-node all-to-all topology costs 8 services and 8 connections, because the new node must be linked to every existing node. With the implementation of this topology, a new challenge has arisen. While conflict prevention by sharding is still possible, this is not the kind of scenario where you want to apply it. We have another conflict prevention mechanism in mind, and this new topology is a good occasion to make it happen.

YMMV. I like the additional choice. There are cases where an all-replicate-to-all topology is still the best option, and cases where a star topology is more advisable.
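The cost of a new node follows from the same counting convention: one service and one channel at each end of every double-sided arrow, with master services not counted. In a star, the newcomer needs a single arrow to the hub, so it costs 2 services. In an all-to-all topology of n nodes, it needs an arrow to each existing node, so it costs 2n services. Here is a minimal sketch with hypothetical function names:

```shell
#!/usr/bin/env bash
# Extra services (and channels) needed to add one node to an existing
# topology of n nodes, assuming one service at each end of every
# double-sided arrow.
star_add_cost()       { echo 2; }              # one new arrow, to the hub
all_to_all_add_cost() { echo $(( 2 * $1 )); }  # one new arrow per existing node

n=4
echo "star: $(star_add_cost "$n"), all-to-all: $(all_to_all_add_cost "$n")"
```

With n=4 it prints `star: 2, all-to-all: 8`, which matches the figures above. The gap widens linearly as the topology grows.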

8 comments:

We have to build a configuration where databases on different servers are synchronised (in one direction only) with one server.

My question: is this possible with the replication feature?

overview:

Server 2 [db1]

Server 3 [db2] --> Server 4 [db1, db2, db3...db25]

Server 4 [db3]

...

Server 25[db25]( maximum 25 servers )

The arrow shows the data flow. On Server 4, the replicated tables will be used by read processes.

Can you suggest a better replication method for this particular scenario? Thanks in advance. - Ganesh

Different servers replicating to one server is called "fan-in" or "multiple-source" replication.

Tungsten Replicator supports this topology. See this article for an example.

Giuseppe, thanks so much for these threads. Your Tungsten posts have really helped me understand the product, which I am currently evaluating for the company I work for.

I'm trying to get my head around the all-master star topology you discuss in this article and your comment about allowing the external nodes to accept updates from all servers. I have 3 VMs set up in VirtualBox, with node 1 as the central master; it receives updates from and transmits updates to nodes 2 and 3 via master-master bidirectional replication. I'm just trying to find the configuration that allows data inserted in node 2 to reach node 3, and vice versa, without the two replicating directly with each other.

This is a requirement for our shop. If I can prove the concept, my superiors are on board to buy licenses for Tungsten Enterprise.

I didn't see a config for the all-master star topology in your cookbook. Can you shed some light on which config parameter I need to enable or set up to allow a case like the one outlined in this article?

Thanks

Jim,

The quickest way to see how the star topology works is to run Tungsten Sandbox with the --verbose option, which shows you how it is done:

```shell
tungsten-sandbox -m 5.5.24 --topology=star --hub=2 --verbose
```

```shell
# One master service per node: alpha (db1), bravo (db2, the hub, since --hub=2), charlie (db3)
./tools/tungsten-installer \
  --master-slave \
  --master-host=127.0.0.1 \
  --cluster-hosts=127.0.0.1 \
  --datasource-port=7101 \
  --datasource-user=msandbox \
  --datasource-password=msandbox \
  --home-directory=/home/tungsten/tsb2/db1 \
  --datasource-log-directory=/home/tungsten/sandboxes/tr_dbs/node1/data \
  --datasource-mysql-conf=/home/tungsten/sandboxes/tr_dbs/node1/my.sandbox.cnf \
  --service-name=alpha \
  --thl-directory=/home/tungsten/tsb2/db1/tlogs \
  --thl-port=12110 \
  --rmi-port=10100 \
  --start

./tools/tungsten-installer \
  --master-slave \
  --master-host=127.0.0.1 \
  --cluster-hosts=127.0.0.1 \
  --datasource-port=7102 \
  --datasource-user=msandbox \
  --datasource-password=msandbox \
  --home-directory=/home/tungsten/tsb2/db2 \
  --datasource-log-directory=/home/tungsten/sandboxes/tr_dbs/node2/data \
  --datasource-mysql-conf=/home/tungsten/sandboxes/tr_dbs/node2/my.sandbox.cnf \
  --service-name=bravo \
  --thl-directory=/home/tungsten/tsb2/db2/tlogs \
  --thl-port=12111 \
  --rmi-port=10102 \
  --start

./tools/tungsten-installer \
  --master-slave \
  --master-host=127.0.0.1 \
  --cluster-hosts=127.0.0.1 \
  --datasource-port=7103 \
  --datasource-user=msandbox \
  --datasource-password=msandbox \
  --home-directory=/home/tungsten/tsb2/db3 \
  --datasource-log-directory=/home/tungsten/sandboxes/tr_dbs/node3/data \
  --datasource-mysql-conf=/home/tungsten/sandboxes/tr_dbs/node3/my.sandbox.cnf \
  --service-name=charlie \
  --thl-directory=/home/tungsten/tsb2/db3/tlogs \
  --thl-port=12112 \
  --rmi-port=10104 \
  --start

# Spoke alpha (db1): remote slave service reading the hub's THL (port 12111),
# accepting events that originated on any remote service
/home/tungsten/tsb2/db1/tungsten/tools/configure-service \
  -C \
  --quiet \
  --host=127.0.0.1 \
  --datasource=127_0_0_1 \
  --role=slave \
  --service-type=remote \
  --master-thl-host=127.0.0.1 \
  --svc-start \
  -a \
  --svc-allow-any-remote-service=true \
  --skip-validation-check=THLStorageCheck \
  --local-service-name=alpha \
  --master-thl-port=12111 bravo

# Hub bravo (db2): remote slave service reading alpha's THL (port 12110);
# --log-slave-updates=true writes the applied events to bravo's binary log,
# so bravo's master service can pass them on to the other spokes
/home/tungsten/tsb2/db2/tungsten/tools/configure-service \
  -C \
  --quiet \
  --host=127.0.0.1 \
  --datasource=127_0_0_1 \
  --role=slave \
  --service-type=remote \
  --master-thl-host=127.0.0.1 \
  --svc-start \
  --skip-validation-check=THLStorageCheck \
  --log-slave-updates=true \
  --local-service-name=bravo \
  --master-thl-port=12110 alpha

# Spoke charlie (db3): remote slave service reading the hub's THL (port 12111)
/home/tungsten/tsb2/db3/tungsten/tools/configure-service \
  -C \
  --quiet \
  --host=127.0.0.1 \
  --datasource=127_0_0_1 \
  --role=slave \
  --service-type=remote \
  --master-thl-host=127.0.0.1 \
  --svc-start \
  -a \
  --svc-allow-any-remote-service=true \
  --skip-validation-check=THLStorageCheck \
  --local-service-name=charlie \
  --master-thl-port=12111 bravo

# Hub bravo (db2): remote slave service reading charlie's THL (port 12112)
/home/tungsten/tsb2/db2/tungsten/tools/configure-service \
  -C \
  --quiet \
  --host=127.0.0.1 \
  --datasource=127_0_0_1 \
  --role=slave \
  --service-type=remote \
  --master-thl-host=127.0.0.1 \
  --svc-start \
  --skip-validation-check=THLStorageCheck \
  --log-slave-updates=true \
  --local-service-name=bravo \
  --master-thl-port=12112 charlie
```
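The pattern in this output extends to any number of spokes. Each spoke needs two services: one remote slave service on the spoke, reading the hub's THL port and accepting any remote service, and one on the hub, reading the spoke's THL port and logging slave updates. The sketch below only prints the commands and reuses the flags verbatim from the listing above. It assumes each node's master service has already been installed with tungsten-installer. The function name, the `name:home:thl-port` argument format, and any extra node such as delta are hypothetical.

```shell
#!/usr/bin/env bash
# Print (not run) the configure-service calls that wire a star topology,
# following the pattern of the sandbox output:
#   - on each spoke, a remote slave service reading the hub's THL,
#     with -a --svc-allow-any-remote-service=true;
#   - on the hub, a remote slave service per spoke, with --log-slave-updates=true.
# Assumption: master services already exist; homes and ports are examples.
HOST=127.0.0.1

# star_commands HUB_NAME:HOME:THL_PORT SPOKE_NAME:HOME:THL_PORT...
star_commands() {
  local hub_name hub_home hub_port spoke name home port
  IFS=: read -r hub_name hub_home hub_port <<< "$1"
  shift
  for spoke in "$@"; do
    IFS=: read -r name home port <<< "$spoke"
    # spoke side: read the hub, accept events from any remote service
    echo "$home/tungsten/tools/configure-service -C --quiet --host=$HOST" \
         "--datasource=127_0_0_1 --role=slave --service-type=remote" \
         "--master-thl-host=$HOST --svc-start -a --svc-allow-any-remote-service=true" \
         "--skip-validation-check=THLStorageCheck" \
         "--local-service-name=$name --master-thl-port=$hub_port $hub_name"
    # hub side: read the spoke, log slave updates so the hub republishes them
    echo "$hub_home/tungsten/tools/configure-service -C --quiet --host=$HOST" \
         "--datasource=127_0_0_1 --role=slave --service-type=remote" \
         "--master-thl-host=$HOST --svc-start" \
         "--skip-validation-check=THLStorageCheck --log-slave-updates=true" \
         "--local-service-name=$hub_name --master-thl-port=$port $name"
  done
}

star_commands bravo:/home/tungsten/tsb2/db2:12111 \
  alpha:/home/tungsten/tsb2/db1:12110 \
  charlie:/home/tungsten/tsb2/db3:12112
```

Called with the three services above, it prints the same four configure-service commands, in the same order, one per line. Appending a hypothetical `delta:/home/tungsten/tsb2/db4:12113` adds exactly two more, which is the cost of extending a star described in the article.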

I am testing star replication with three nodes/services (2.0.7-278).

alpha as the central node.

bravo and charlie as spokes.

I set it up via the cookbook.

Entries written to a spoke are replicated to the other spoke only if I manually copy the meta-databases after installation, i.e. tungsten_charlie to node bravo and tungsten_bravo to node charlie.

Am I doing something wrong, or is it because I am German? :-)

Thanks in advance

Anton SIMAK...

I have already deployed multi-master (alpha, charlie, bravo), and it works like a charm. My question: if I want to add a new node (delta, as a master) while replication is in progress, is that possible?

You can add a new node using ./cookbook/add_node_star

Please post your requests to http://groups.google.com/group/tungsten-replicator-discuss

We have a data center in China and one in the USA. Our app requires up to 1 GB of files attached to database records. We do not want to store the files as BLOBs within the database, but on separate cheap disks. If MySQL were used, what would the recommended topology be? How do we replicate the associated files across continents and keep them consistent?

Thank you,

Subu Mysore
